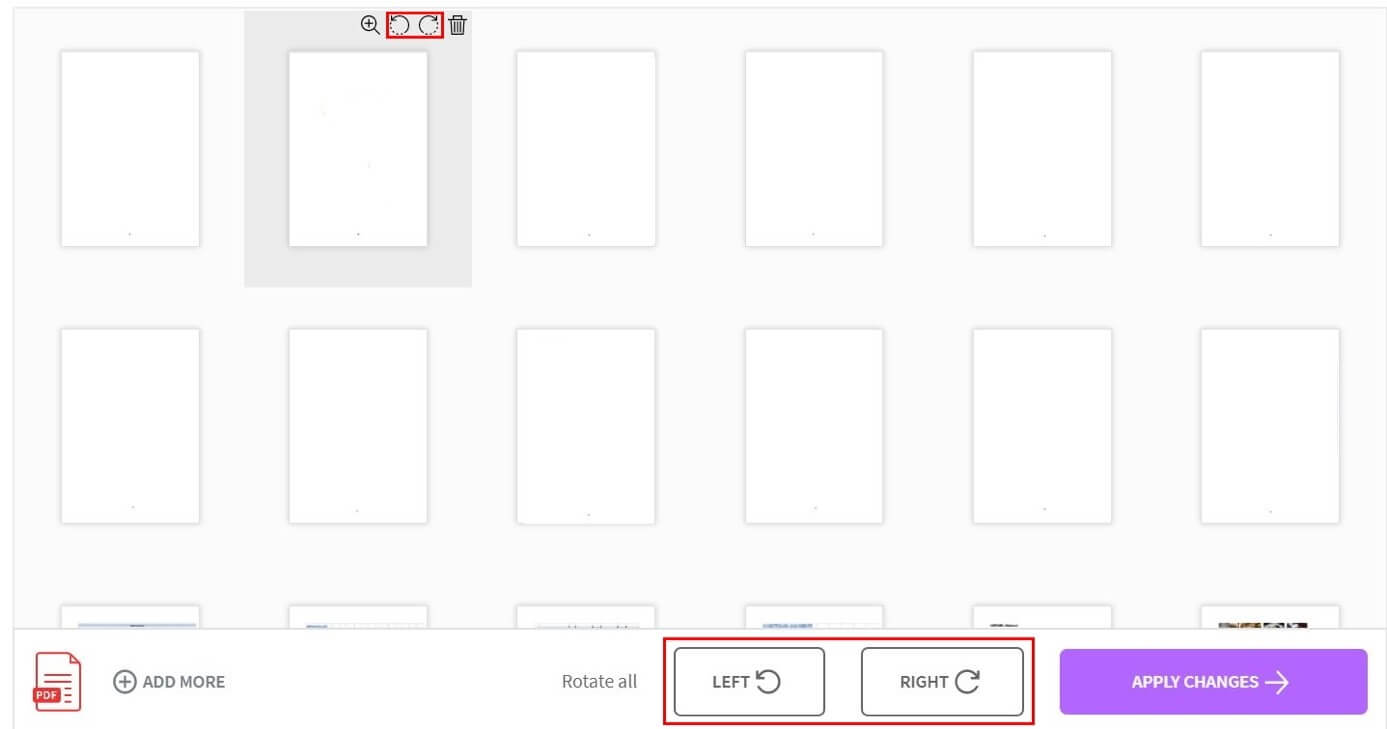

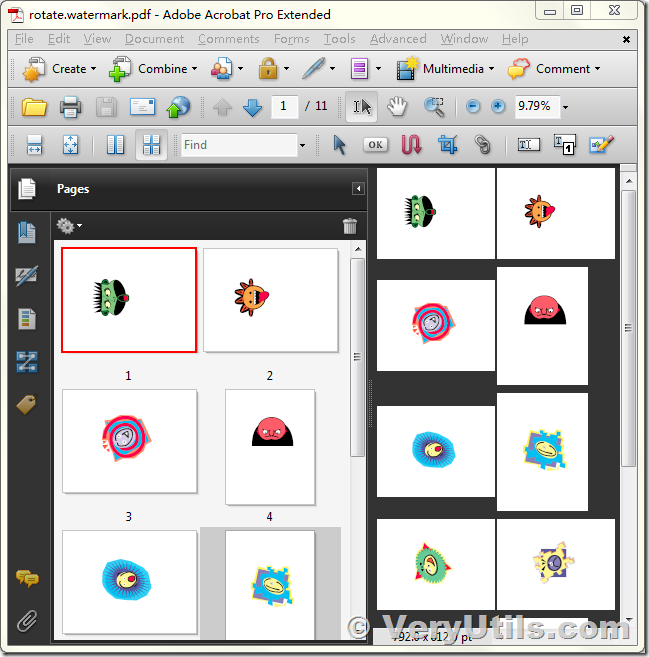

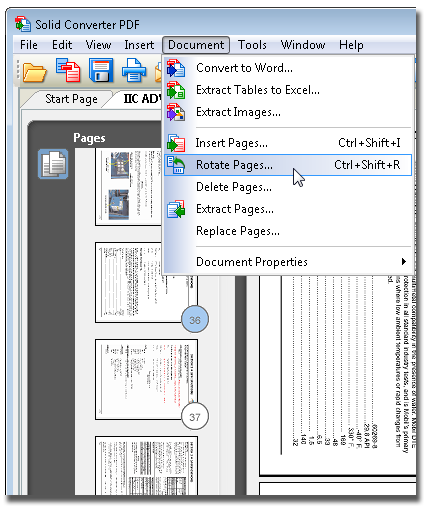

I am making a script that repairs scanned documents, and I now need a way to detect the image orientation and rotate the image so that its rotation is correct.

Right now my script is unreliable and isn't that precise. It looks for a line and rotates the image so that the first line it sees is correct, but this barely works except for a few images.

    import cv2
    from scipy import ndimage

    img_before = cv2.imread('rotated_377.jpg')
    img_gray = cv2.cvtColor(img_before, cv2.COLOR_BGR2GRAY)
    img_edges = cv2.Canny(img_gray, 100, 100, apertureSize=3)
    # ...
    img_rotated = ndimage.rotate(img_before, median_angle)

I want to make a script that gets an image like this one (don't mind the image, it's for testing purposes) and rotates it the right way, so I get a correctly oriented image.

This is an interesting problem. I have tried many approaches to correct the orientation of document images, but all of them have different exceptions. I am sharing one of the approaches, based on text orientation. For text region detection I am using the gradient map of the input image. All other implementation details are commented in the code.

Please note that this only works if all the text present in the image has the same orientation.

    # This approach is based on text orientation.
    # Assumption: the document image contains all text in the same orientation.
    import cv2
    import numpy as np

    def display(img, frameName="OpenCV Image"):
        ...

    # ...
    M = cv2.getRotationMatrix2D(image_center, theta, 1)
    bound_w = int(rows * abs_sin + cols * abs_cos)
    bound_h = int(rows * abs_cos + cols * abs_sin)
    # rotate original image to show transformation
    # ...

    small = cv2.cvtColor(textImg, cv2.COLOR_BGR2GRAY)
    kernel = cv2.getStructuringElement(cv2.MORPH_ELLIPSE, (3, 3))
    grad = cv2.morphologyEx(small, cv2.MORPH_GRADIENT, kernel)
    # ...
    # kernel value (9, 1) can be changed to improve the text detection
    kernel = cv2.getStructuringElement(cv2.MORPH_RECT, (9, 1))
    connected = cv2.morphologyEx(bw, cv2.MORPH_CLOSE, kernel)

    # using RETR_EXTERNAL instead of RETR_CCOMP
    # _, contours, hierarchy = cv2.findContours(connected.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_NONE)
    contours, hierarchy = cv2.findContours(connected.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_NONE)  # opencv >= 4.0

    mask = np.zeros(bw.shape, dtype=np.uint8)
    # ...
    # ratio of non-zero pixels in the filled region
    r = float(cv2.countNonZero(mask[y:y+h, x:x+w])) / (w * h)
    # assume at least 45% of the area is filled if it contains text
    # ...
    # we can filter theta as an outlier based on the other theta values;
    # this will help in excluding the rare text region whose orientation differs from the usual value
    # ...
    # find the average of all cumulative theta values
    # ...
    print("Image orientation in degrees: ", orientation)
    display(textImg, "Detected text minimum bounding box")

Here is the image with the detected text regions; from this we can see that some of the text regions are missing. Text orientation detection plays the key role in overall document orientation detection, so depending on the document type a few small tweaks should be made to the text detection algorithm to make this approach work better.

Here is the final image with correct orientation.

Please suggest modifications to this approach to make it more robust.
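The comments in the answer's code mention that theta values could be filtered as outliers before averaging. Here is a minimal sketch of one way that might be done, assuming the per-region angles have already been collected in a list. The helper name `robust_orientation` and the `max_dev` tolerance are hypothetical and not part of the original answer. It swaps the plain average for a median-based filter.

```python
from statistics import mean, median


def robust_orientation(thetas, max_dev=5.0):
    """Average only the angles (in degrees) that lie close to the median.

    Hypothetical helper: `thetas` is assumed to hold one angle per detected
    text region, and `max_dev` is an illustrative tolerance in degrees.
    """
    if not thetas:
        raise ValueError("no text regions detected")
    mid = median(thetas)
    # Keep the regions whose angle agrees with the bulk of the page.
    inliers = [t for t in thetas if abs(t - mid) <= max_dev]
    # Fall back to the median itself if nothing lies within the tolerance.
    return mean(inliers) if inliers else mid


# Four regions agree on roughly 12 degrees; two stray regions are ignored.
angles = [12.1, 11.8, 12.4, 12.0, -60.0, 85.0]
print(robust_orientation(angles))
```

With a plain average, the two stray regions above would pull the estimate well away from 12 degrees. Angles near the ±90 degree wrap-around would still need separate handling.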
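The `bound_w` and `bound_h` lines in the answer's code compute the size of the canvas needed to hold the rotated image without cropping its corners. The sketch below isolates that arithmetic in plain Python, assuming `abs_cos` and `abs_sin` are the absolute cosine and sine of the rotation angle. The function name `rotated_canvas` is hypothetical.

```python
import math


def rotated_canvas(rows, cols, theta_deg):
    """Return (width, height) of the axis-aligned canvas that holds an image
    of `rows` x `cols` pixels after rotating it by `theta_deg` degrees."""
    theta = math.radians(theta_deg)
    abs_cos = abs(math.cos(theta))
    abs_sin = abs(math.sin(theta))
    # Same formulas as the bound_w / bound_h lines in the answer.
    bound_w = int(rows * abs_sin + cols * abs_cos)
    bound_h = int(rows * abs_cos + cols * abs_sin)
    return bound_w, bound_h


print(rotated_canvas(100, 200, 0))   # unrotated: canvas equals the image size
print(rotated_canvas(100, 200, 30))  # tilted: canvas grows in both directions
```

When the canvas is enlarged like this, the translation column of the rotation matrix also has to be shifted so the image stays centred in the new canvas before the matrix is passed to `cv2.warpAffine`.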